The Big Picture

AI agents let language models carry out multi-step tasks across apps and devices, but success hinges on the surrounding architecture — controllers, memory, permissions, and verification — not just bigger models.

The Evidence

A practical architecture view organizes agent systems into six modular pieces (perception, memory, action, profiling, planning, and learning) and shows how those parts are assembled for real tasks. Teams are moving from single-model call loops to controlled workflows and explicit orchestration graphs that improve debuggability and safety. The major failure modes are "hallucination in action" (models taking incorrect or dangerous actions), indirect prompt injection (malicious instructions disguised as data), and cascading errors across multi-step plans. Evaluation needs to measure cost, responsiveness, correctness, security, and stability together, because deeper reasoning often increases both compute and failure risk.
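To make the six-module view concrete, here is a minimal sketch, assuming Python and hypothetical callable interfaces (`ModularAgent`, `perceive`, `plan`, `act`, `recall` are illustrative names, not the survey's API). Each module is a pluggable function or store, and `step` runs one pass of the control loop: perceive, retrieve memory, plan, act, then record the episode and any lesson.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ModularAgent:
    """Hypothetical wiring of the six modules into a single control step."""

    profile: str                                      # profiling: role / persona text
    perceive: Callable[[str], str]                    # perception: raw input -> observation
    plan: Callable[[str, str, list[str]], str]        # planning: (profile, observation, memories) -> action
    act: Callable[[str], str]                         # action: execute the action, return a result
    memory: list[str] = field(default_factory=list)   # memory: naive in-process episode log
    lessons: list[str] = field(default_factory=list)  # learning: feedback kept for later steps

    def recall(self, k: int = 3) -> list[str]:
        # Naive retrieval: the k most recent episodes (a vector store would replace this).
        return self.memory[-k:]

    def step(self, raw_input: str) -> str:
        observation = self.perceive(raw_input)
        action = self.plan(self.profile, observation, self.recall())
        result = self.act(action)
        self.memory.append(f"{observation} -> {action} -> {result}")
        if result.startswith("error"):
            # Learning: remember failed actions so a planner could avoid them later.
            self.lessons.append(f"avoid: {action}")
        return result


agent = ModularAgent(
    profile="file assistant",
    perceive=lambda text: text.strip().lower(),
    plan=lambda profile, obs, memories: f"list:{obs}",
    act=lambda action: f"ok {action}",
)
print(agent.step("  Docs "))  # ok list:docs
```

Swapping any one callable (for example, a vector-store `recall` or an LLM-backed `plan`) leaves the loop unchanged, which is the practical point of treating the pieces as modules.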

Data Highlights

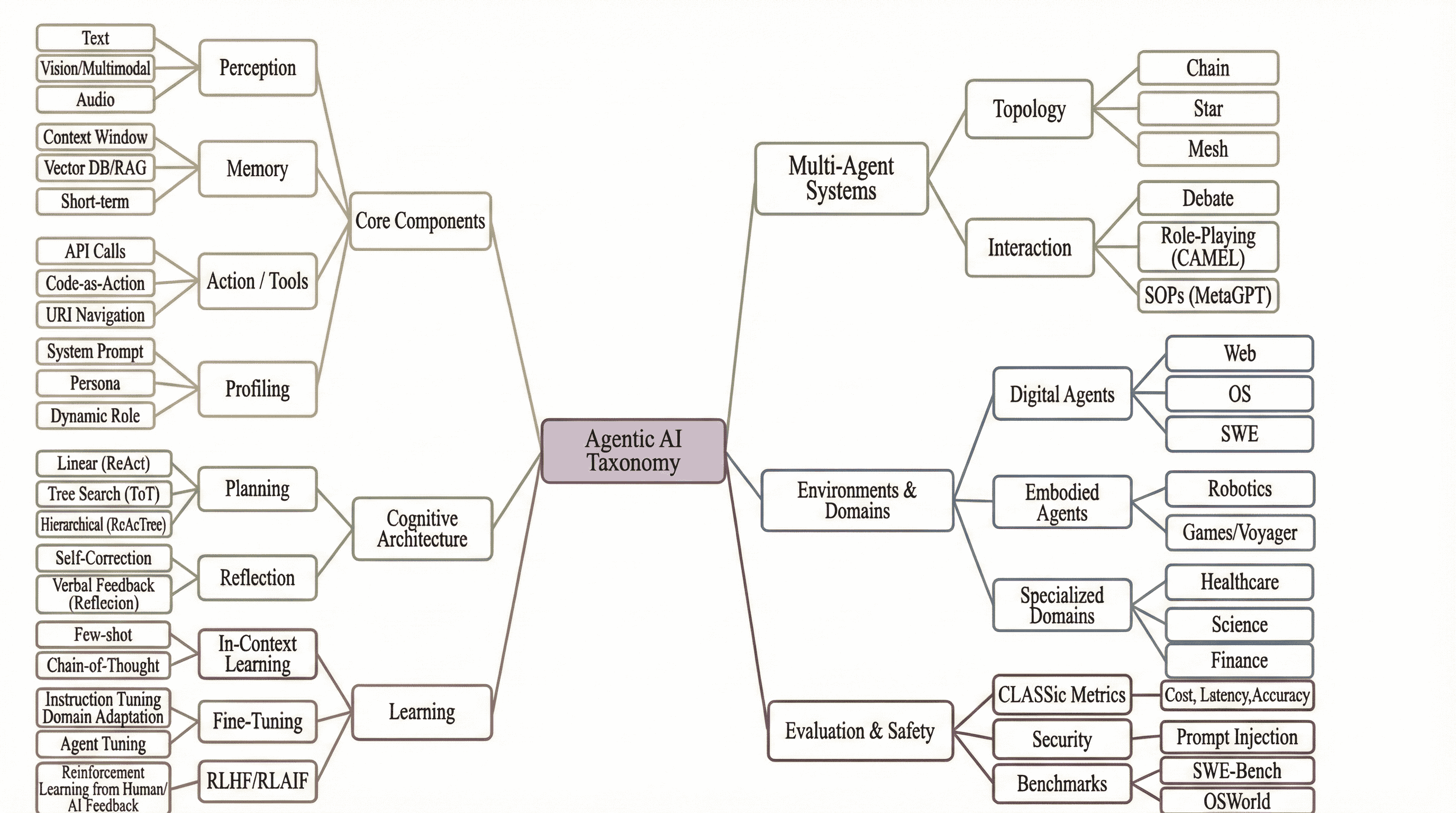

1. Taxonomy breaks agent systems into 6 modular dimensions: core components, cognitive architecture, learning, multi-agent systems, environments, and evaluation.

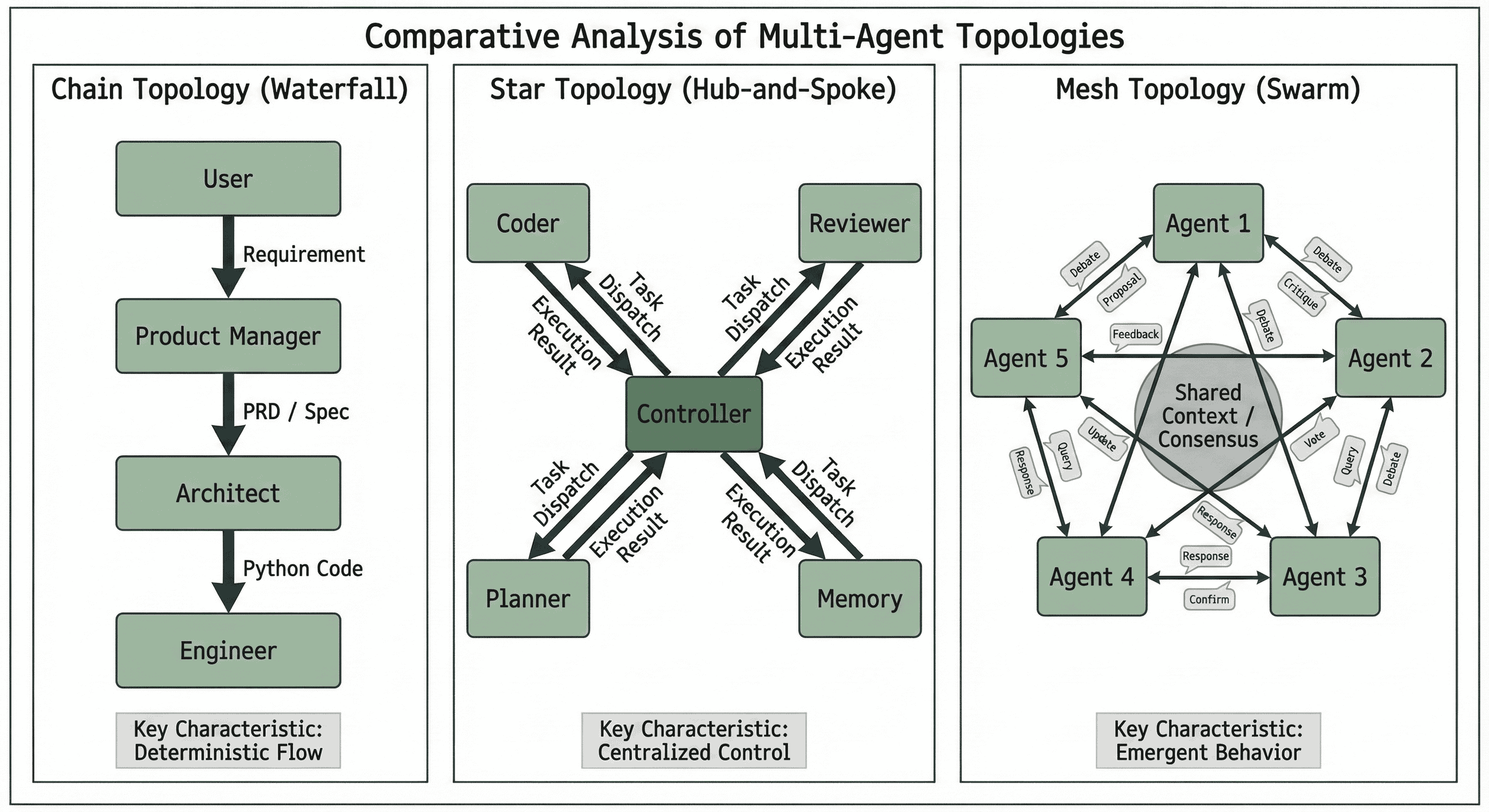

2. Multi-agent interaction patterns compress into 3 main topologies: chain (sequential), star (central controller), and mesh (decentralized swarm).

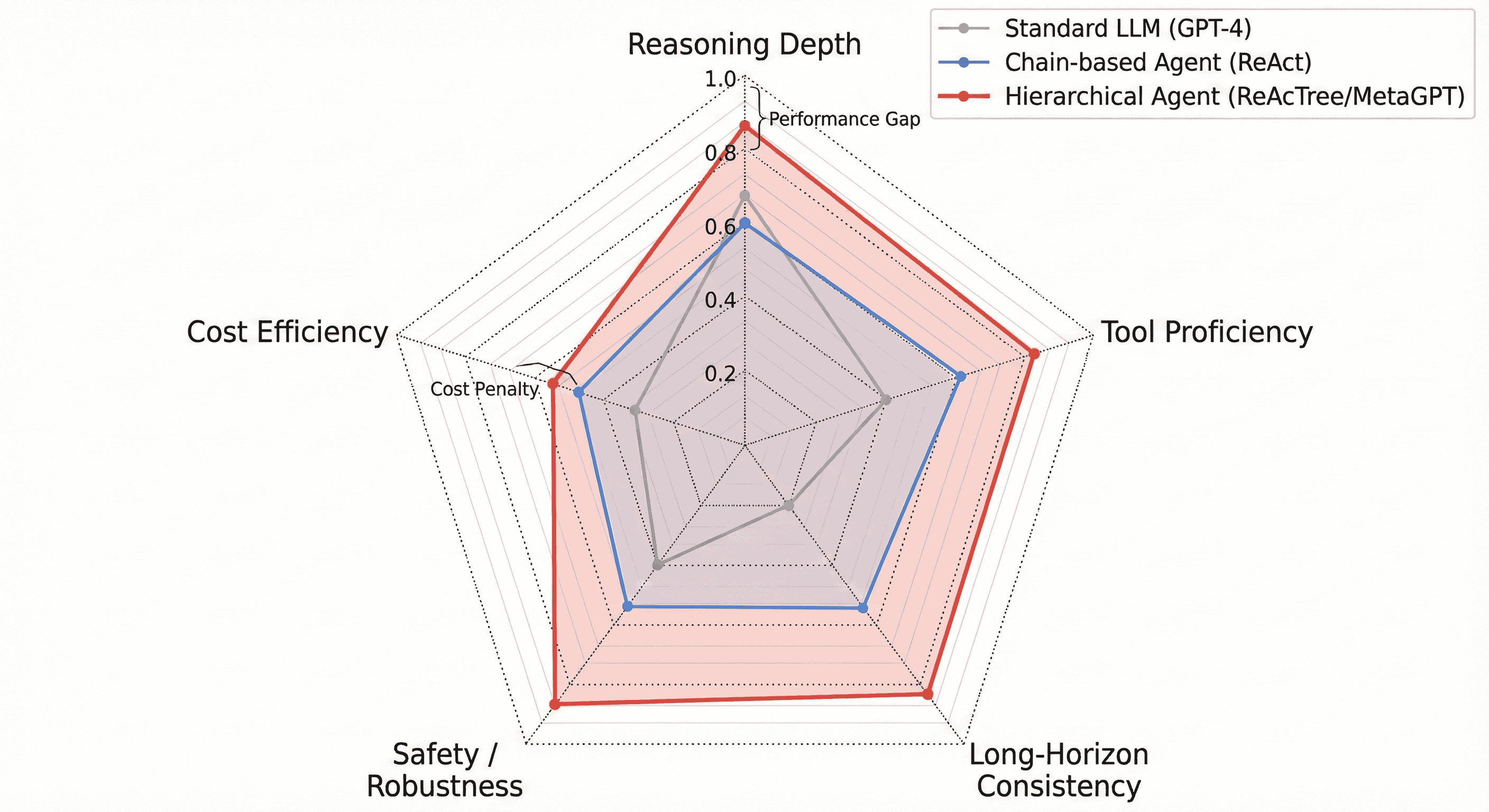

3. Evaluation uses 5 CLASSic axes (Cost, Latency, Accuracy, Security, and Stability), and richer reasoning often raises computational cost exponentially compared with simple chains.
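One way to report all five CLASSic axes side by side is sketched below, assuming Python and hypothetical per-run fields. The survey names the axes but not these formulas; in particular, the `stability` definition here (agreement across repeated runs of the same task) is an assumption chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class RunResult:
    cost_usd: float          # Cost: spend for one task attempt
    latency_s: float         # Latency: wall-clock seconds for the attempt
    correct: bool            # Accuracy: outcome judged correct
    policy_violation: bool   # Security: an unsafe or unauthorized action was attempted


def classic_report(runs_per_task: dict[str, list[RunResult]]) -> dict[str, float]:
    """Aggregate repeated runs into one score per CLASSic axis (hypothetical definitions)."""
    all_runs = [r for runs in runs_per_task.values() for r in runs]
    # Stability (assumed definition): 1 - 2 * mean per-task std dev of the 0/1 outcome,
    # so 1.0 means every repeat of a task agrees and 0.0 means coin-flip behavior.
    spread = mean(pstdev([float(r.correct) for r in runs]) for runs in runs_per_task.values())
    return {
        "cost_usd_mean": mean(r.cost_usd for r in all_runs),
        "latency_s_mean": mean(r.latency_s for r in all_runs),
        "accuracy": mean(float(r.correct) for r in all_runs),
        "security_violation_rate": mean(float(r.policy_violation) for r in all_runs),
        "stability": 1.0 - 2.0 * spread,
    }


# Hypothetical runs: two repeats each of two tasks.
runs = {
    "book_flight": [RunResult(0.02, 3.0, True, False), RunResult(0.03, 5.0, False, False)],
    "rename_files": [RunResult(0.01, 1.0, True, False), RunResult(0.01, 1.0, True, False)],
}
print(classic_report(runs))
```

Keeping the axes as separate numbers, rather than one blended score, makes the trade-off visible: a deeper-reasoning agent can raise `accuracy` while also raising `cost_usd_mean` and `latency_s_mean`.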

What This Means

Engineers building automation, platform leads designing safe integrations, and researchers evaluating agent behavior should care: the paper maps concrete architectural choices (memory backends, tool connectors, graph controllers) to real failure modes. Product and security teams can use its checklist-style view to decide where to add permissions, checkpoints, and human approvals before deployment.

Key Figures

Figure 1: Taxonomy of the Agentic AI ecosystem. The figure organizes the literature into six main dimensions: Core Components, Cognitive Architecture, Learning, Multi-Agent Systems, Environments, and Evaluation. Together, these dimensions trace the field's progression from simple text-based loops to complex hierarchical systems that can operate in open-ended environments.

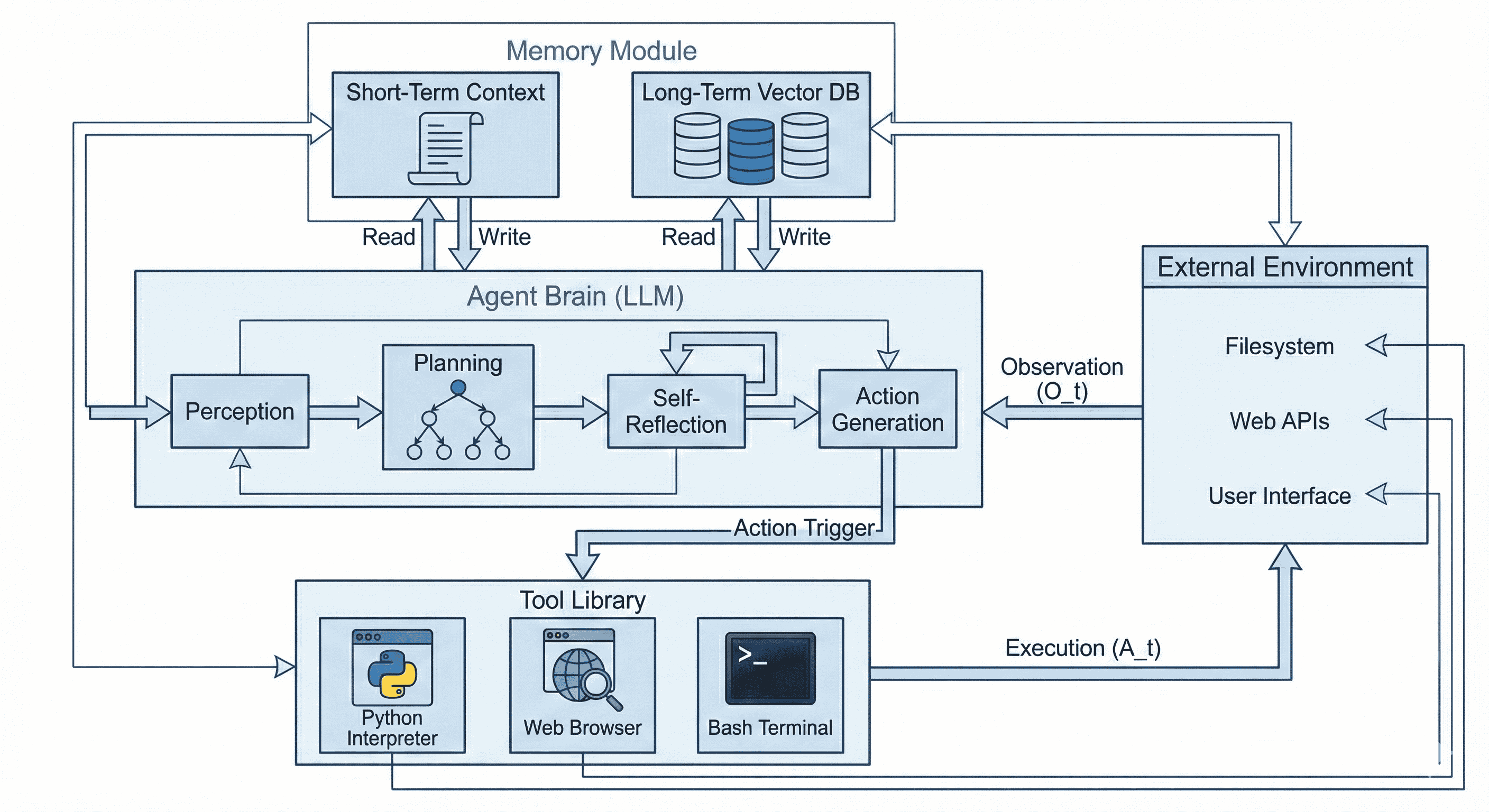

Figure 2: The unified architecture of Agentic AI. The system is shown as a modified POMDP (partially observable Markov decision process) loop. The agent brain at the center transforms each observation $O_t$ into a reasoning trace $Z_t$ using hierarchical planning and self-reflection. A dual-stream memory module at the top supports context retrieval, while a tool library at the bottom executes code-based actions $A_t$ that change the external environment on the right.

Figure 3: Communication Topologies in Multi-Agent Systems. We classify collaboration patterns into three dominant structures: (Left) Chain Topology, utilized by MetaGPT to enforce Standard Operating Procedures (SOPs) via sequential hand-offs; (Center) Star Topology, employed by AutoGen where a Controller agent dispatches tasks to specialized workers; and (Right) Mesh Topology, used in social simulations like Generative Agents to enable dynamic, unstructured interaction.

Figure 4: Multidimensional Architectural Comparison. We compare architectures across the CLASSic dimensions. While Hierarchical Agents (Red) achieve superior Reasoning Depth and Tool Proficiency, they incur a significant Cost Penalty and Latency compared to standard LLMs (Grey).
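The three communication topologies from the Figure 3 caption differ mainly in who sends messages to whom. The sketch below, assuming Python and treating each agent as a plain function from message to message, captures only that routing; it does not reproduce MetaGPT, AutoGen, or Generative Agents, and every name in it (`run_chain`, `run_star`, `run_mesh`) is hypothetical.

```python
from typing import Callable

AgentFn = Callable[[str], str]  # hypothetical: an agent maps an input message to an output message


def run_chain(agents: list[AgentFn], task: str) -> str:
    # Chain: each agent hands its output to the next (sequential, SOP-style hand-offs).
    msg = task
    for agent in agents:
        msg = agent(msg)
    return msg


def run_star(controller: Callable[[str], list[tuple[str, str]]],
             workers: dict[str, AgentFn], task: str) -> dict[str, str]:
    # Star: a central controller splits the task into (worker_name, subtask) pairs
    # and dispatches each subtask to the named worker.
    return {name: workers[name](sub) for name, sub in controller(task)}


def run_mesh(agents: dict[str, AgentFn], seed: str, rounds: int) -> dict[str, list[str]]:
    # Mesh: no controller; each round, every agent reads what the others said last round.
    inbox = {name: [seed] for name in agents}
    for _ in range(rounds):
        outgoing = {name: agent(" | ".join(inbox[name])) for name, agent in agents.items()}
        inbox = {name: [msg for src, msg in outgoing.items() if src != name] for name in agents}
    return inbox


upper: AgentFn = lambda s: s.upper()
exclaim: AgentFn = lambda s: s + "!"
print(run_chain([upper, exclaim], "spec"))  # SPEC!
split = lambda t: [("coder", t + ":code"), ("tester", t + ":test")]
print(run_star(split, {"coder": upper, "tester": exclaim}, "app"))
# {'coder': 'APP:CODE', 'tester': 'app:test!'}
```

The routing also hints at the observability argument: a chain or star has one obvious place to log and checkpoint, while a mesh spreads state across every inbox.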

Considerations

The work is a broad engineering-focused survey rather than a single benchmark study, so it does not report specific numeric performance gains across systems. Many examples and evaluations come from controlled sandboxes; real-world robustness may be worse on diverse, changing interfaces. Remedies like hierarchical planning and verification reduce some failures but often increase computational cost and complexity, so the trade-offs must be tested per use case.
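The verification trade-off can be made concrete with back-of-the-envelope arithmetic. This sketch, assuming Python, independent steps, and purely hypothetical numbers (none come from the survey), models cascading errors as every step needing to succeed, and models verification as one extra checker call per step that catches a failed step with some probability and retries it once.

```python
def plan_success(p_step: float, n_steps: int) -> float:
    # Cascading errors: the plan succeeds only if every step succeeds
    # (steps assumed independent, no recovery).
    return p_step ** n_steps


def with_verifier(p_step: float, catch_rate: float) -> tuple[float, float]:
    """Per-step success and expected model calls with one verify-and-retry.

    Assumptions: the verifier costs one call per step, catches a failed step with
    probability `catch_rate`, and a caught step is retried once without re-verification.
    """
    p_eff = p_step + (1 - p_step) * catch_rate * p_step
    expected_calls = 1 + 1 + (1 - p_step) * catch_rate  # action + verifier + occasional retry
    return p_eff, expected_calls


p, n = 0.95, 10  # hypothetical numbers, not results from the survey
p_eff, calls = with_verifier(p, catch_rate=0.9)
print(f"no verifier:   success {plan_success(p, n):.3f}, 1.000 calls/step")
print(f"with verifier: success {plan_success(p_eff, n):.3f}, {calls:.3f} calls/step")
```

Under these assumptions a 10-step plan goes from about 0.60 to about 0.93 success, while expected calls per step roughly double (from 1 to about 2.05). Different step reliabilities or verifier costs shift that balance, which is why the trade-off has to be measured per use case.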

Methodology & More

The report reframes modern autonomous systems as complete agent architectures rather than isolated language model calls. It proposes a unified, engineering-first taxonomy that splits agent design into six dimensions (perception, memory, action, profiling, planning, and learning) and ties each to the agent control loop. Practical building blocks discussed include multimodal perception (screenshots, audio, 3D), persistent memory backed by vector stores, flexible "code as action" tool execution, and connector standards that let platforms enforce allowlists and auditing. A central design trend is replacing free-form manager chat loops with explicit orchestration graphs and state machines (flow engineering), so developers can insert checkpoints, approvals, and typed transitions for safer long-horizon work.
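Flow engineering in the sense described above can be sketched as a small state machine. The example below assumes Python and hypothetical names (`State`, `TRANSITIONS`, `run_workflow`, and the `planner`/`approve`/`executor` callables); it is not a specific framework's API. The transition table is fixed in code, so the model can only fill in content, and an approval checkpoint sits in front of the only state that causes side effects.

```python
from enum import Enum, auto
from typing import Callable


class State(Enum):
    PLAN = auto()
    AWAIT_APPROVAL = auto()
    EXECUTE = auto()
    DONE = auto()
    REJECTED = auto()


# Allowed transitions: anything outside this table is a bug, not a model decision.
TRANSITIONS: dict[State, set[State]] = {
    State.PLAN: {State.AWAIT_APPROVAL},
    State.AWAIT_APPROVAL: {State.EXECUTE, State.REJECTED},
    State.EXECUTE: {State.DONE},
    State.DONE: set(),
    State.REJECTED: set(),
}


def run_workflow(task: str,
                 planner: Callable[[str], str],
                 approve: Callable[[str], bool],
                 executor: Callable[[str], str]) -> tuple[State, list[str]]:
    state, plan, trace = State.PLAN, "", []
    while state not in (State.DONE, State.REJECTED):
        if state is State.PLAN:
            plan = planner(task)
            nxt = State.AWAIT_APPROVAL
        elif state is State.AWAIT_APPROVAL:
            # Checkpoint: a human (or policy) must approve before any side effect.
            nxt = State.EXECUTE if approve(plan) else State.REJECTED
        else:  # State.EXECUTE
            trace.append(executor(plan))
            nxt = State.DONE
        if nxt not in TRANSITIONS[state]:
            raise RuntimeError(f"illegal transition {state.name} -> {nxt.name}")
        trace.append(f"{state.name}->{nxt.name}")  # audit trail of typed transitions
        state = nxt
    return state, trace


state, trace = run_workflow(
    "clean /tmp",
    planner=lambda task: f"plan: {task}",
    approve=lambda plan: "rm" not in plan,  # stand-in for a human approval prompt
    executor=lambda plan: f"ran {plan}",
)
print(state.name, trace)
```

Because every run leaves a transition trace, a failed long-horizon task can be debugged by reading which state it stopped in, rather than re-reading a free-form chat transcript.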

Analysis of multi-agent patterns shows three dominant topologies (chain, star, mesh) and argues that production systems increasingly prefer graph-based coordination for observability and recovery. Evaluation must go beyond text similarity to a multidimensional framework the authors call CLASSic: Cost, Latency, Accuracy, Security, and Stability. Key risks are concrete: hallucinations become destructive actions (wrong API calls, file deletions), indirect prompt injection hides malicious instructions in data or UI, and cascading failures occur when an early planning error is executed downstream. Recommended engineering practices include explicit controllers, robust retrieval and verification before execution, permissioned connector layers, and pre-production testing against realistic UI and adversarial inputs.
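A permissioned connector layer with verification before execution might look like the following minimal sketch, assuming Python; `ToolCall`, `Connector`, and the `verify` rule are hypothetical illustrations, not a real connector standard. Every call is checked against an allowlist, destructive tools need an extra check, and every decision is written to an audit log. Because the check runs outside the model, an instruction smuggled in through retrieved data (indirect prompt injection) cannot talk its way past it; note that this limits damage but does not detect the injection itself.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolCall:
    tool: str
    args: dict[str, str]


@dataclass
class Connector:
    tools: dict[str, Callable[..., str]]   # registered tool implementations
    allowlist: set[str]                    # tools this agent may call at all
    destructive: set[str]                  # tools that must pass `verify` first
    verify: Callable[[ToolCall], bool]     # hypothetical pre-execution check (rule or human)
    audit_log: list[str] = field(default_factory=list)

    def call(self, c: ToolCall) -> str:
        if c.tool not in self.allowlist or c.tool not in self.tools:
            self.audit_log.append(f"DENY {c.tool} {c.args}")
            return "denied: not allowlisted"
        if c.tool in self.destructive and not self.verify(c):
            self.audit_log.append(f"BLOCK {c.tool} {c.args}")
            return "blocked: verification failed"
        self.audit_log.append(f"ALLOW {c.tool} {c.args}")
        return self.tools[c.tool](**c.args)


conn = Connector(
    tools={"read_file": lambda path: f"contents of {path}",
           "delete_file": lambda path: f"deleted {path}"},
    allowlist={"read_file", "delete_file"},
    destructive={"delete_file"},
    verify=lambda c: c.args.get("path", "").startswith("/tmp/"),  # hypothetical rule
)
print(conn.call(ToolCall("read_file", {"path": "notes.txt"})))       # contents of notes.txt
print(conn.call(ToolCall("delete_file", {"path": "/etc/passwd"})))   # blocked: verification failed
print(conn.call(ToolCall("send_email", {"to": "ops@example.com"})))  # denied: not allowlisted
```

A wrong API call or file deletion produced by "hallucination in action" then fails at the connector instead of reaching the environment, and the audit log records the attempt for later review.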

Credibility Assessment:

The author list includes Rajkumar Buyya, a well-known researcher with a very high h-index, which lends the work strong credibility despite its arXiv venue.